One of the most contentious debates in neuroscience is whether the adult human brain can produce new neurons. Although there is evidence that rodents maintain a population of immature neurons throughout their lives, confirming this phenomenon in humans has been difficult, mainly because of post-mortem tissue degradation and the lack of specific molecular markers. A new study by Disouky et al. (2026), published in Nature, examines this process in depth. The authors report that the “birth” of new neurons not only occurs in the adult human hippocampus but that its decline in Alzheimer’s disease is driven by changes in the cells’ epigenetic landscape. In other words, while the DNA sequence remains the same, the chemical tags and structural packing of the genome change, effectively deciding which genes are turned on or off.

To address the debate, the researchers analyzed over 355,000 individual cell nuclei from the hippocampi of young adults, healthy seniors, and people with Alzheimer’s. They found a clear assembly line in the brain, in which starter cells known as neural stem cells begin a transformation into neuroblasts. These cells then become immature neurons before finally graduating into mature granule neurons that are fully integrated into memory circuits. The team used a predictive calculation called RNA velocity to show that cells actually move through these stages, supporting the idea that the adult human brain maintains a pool of neural stem cells. RNA velocity compares the abundance of newly transcribed (unspliced) and mature (spliced) RNA for each gene to project where a cell’s gene expression is heading (La Manno et al., 2018). In other words, the mix of RNA a cell is producing indicates not only its current stage of development but also the direction in which it is moving.
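To make the idea of RNA velocity more concrete, here is a minimal sketch of the steady-state model described by La Manno et al. (2018), in which a gene's velocity is the gap between its observed unspliced RNA and the amount expected at equilibrium. This is an illustration only, not the pipeline used by Disouky et al.: the function names and the toy counts are hypothetical, and the simple least-squares fit stands in for the more careful estimation used in real tools.

```python
import numpy as np

def fit_gamma(unspliced, spliced):
    """Estimate the degradation ratio gamma, assuming u ≈ gamma * s at steady state.

    Simplification: an ordinary least-squares slope through the origin.
    """
    u = np.asarray(unspliced, dtype=float)
    s = np.asarray(spliced, dtype=float)
    return float(u @ s / (s @ s))

def rna_velocity(unspliced, spliced, gamma):
    """Velocity = observed unspliced RNA minus the amount expected at steady state.

    Positive: the gene is being switched on. Negative: it is being switched off.
    """
    u = np.asarray(unspliced, dtype=float)
    s = np.asarray(spliced, dtype=float)
    return u - gamma * s

# Hypothetical counts for one gene in cells assumed to be at steady state.
steady_spliced = np.array([2.0, 4.0, 6.0, 8.0])
steady_unspliced = np.array([1.0, 2.0, 3.0, 4.0])
gamma = fit_gamma(steady_unspliced, steady_spliced)

# Three hypothetical cells with equal spliced RNA but different unspliced RNA.
cells_unspliced = np.array([5.0, 2.0, 1.0])
cells_spliced = np.array([4.0, 4.0, 4.0])
velocity = rna_velocity(cells_unspliced, cells_spliced, gamma)

print(gamma)     # fitted steady-state ratio
print(velocity)  # one value per cell: ramping up, steady, winding down
```

In this toy example, the first cell has more unspliced RNA than the steady state predicts, so the gene is ramping up; the third has less, so the gene is winding down. Combining such signals across many genes is what lets researchers infer the direction in which a cell is developing.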

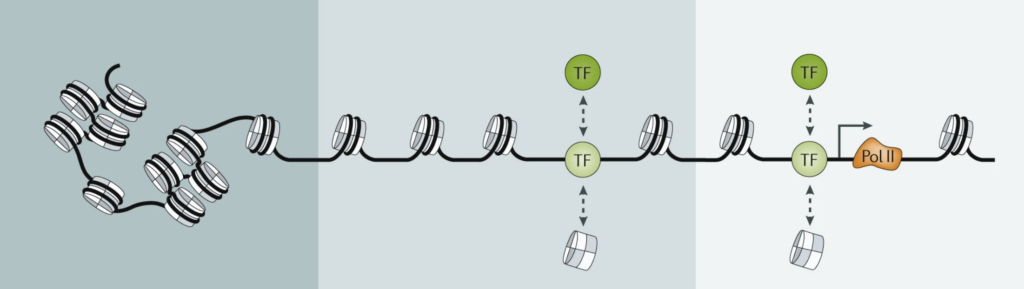

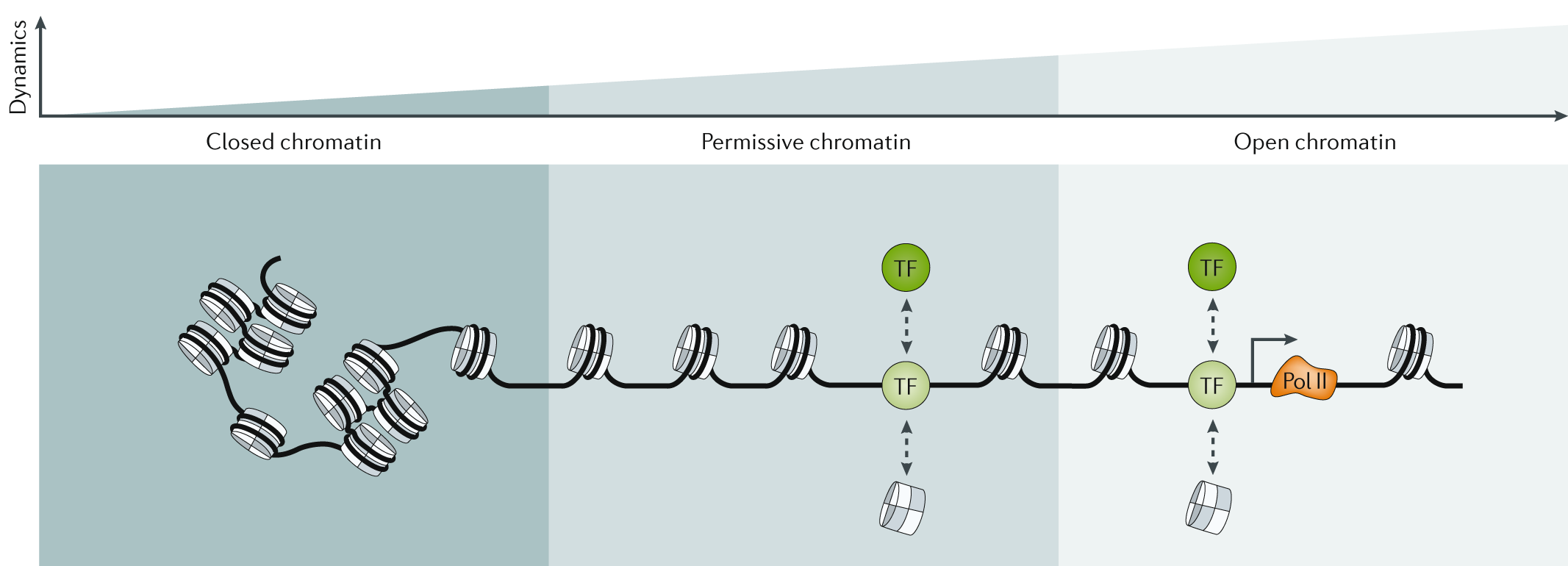

The study’s most important discovery involves epigenetics, which governs how the genome’s internal switches are set. If DNA is like a massive library of books (genes), then epigenetics determines which books are actually open and readable. The researchers found that in Alzheimer’s disease, the problem isn’t just that cells are dying, but that the books for making new neurons are being slammed shut. This is known as a change in chromatin accessibility (Klemm et al., 2019). In Alzheimer’s patients, the number of immature neurons is significantly lower than in healthy individuals. Interestingly, in people with preclinical Alzheimer’s (those who show early signs of the disease in the brain but have not yet developed clear symptoms), these DNA locks are already beginning to appear. So, while the DNA itself remains the same, its expression differs.

When the authors looked at another group known as SuperAgers (SA), people over 80 years old with the memory capacity of someone in their fifties, they found a distinct profile of neurogenesis (the formation of new neurons). The brains of SuperAgers contained significantly more immature neurons than those of people with Alzheimer’s. Even after excluding potential outliers, the researchers observed a 2.5-fold increase in immature neurons in the SuperAger group compared to other cohorts. This suggests there is a “resilience signature” of neurogenesis that may play a role in maintaining exceptional memory capacity despite advanced age. This signature is primarily characterized by maintained chromatin accessibility in regions that are typically “locked” or downregulated in the Alzheimer’s brain.

Ultimately, this research shifts the focus of Alzheimer’s research from simple cell death to the underlying gene regulatory networks that govern how cells function and grow. By identifying the specific “activator” and “repressor” switches (transcription factors) that are active in SuperAgers versus those that are shut down in Alzheimer’s, the study provides a roadmap for future medical interventions. For example, targeting the specific chromatin regions that govern synaptic plasticity could potentially prevent or mitigate the deterioration of neurogenesis seen in dementia. While the study notes limitations due to the high variability of human brain samples and limited sample sizes, the findings highlight the role of epigenetics as a potentially more definitive indicator of cognitive health than gene expression alone. This suggests that the future of treating cognitive decline may lie in reopening the brain’s internal library to restore its natural ability to regenerate and remember.

References

Disouky, A., Sanborn, M. A., Sabitha, K. R., Mostafa, M. M., Ayala, I. A., Bennett, D. A., Lu, Y., Zhou, Y., Keene, C. D., Weintraub, S., Gefen, T., Mesulam, M., Geula, C., Maienschein-Cline, M., Rehman, J., & Lazarov, O. (2026). Human hippocampal neurogenesis in adulthood, ageing and Alzheimer’s disease. Nature, 652(8112), 1264–1273. https://doi.org/10.1038/s41586-026-10169-4

Klemm, S. L., Shipony, Z., & Greenleaf, W. J. (2019). Chromatin accessibility and the regulatory epigenome. Nature Reviews Genetics, 20(4), 207–220. https://doi.org/10.1038/s41576-018-0089-8

La Manno, G., Soldatov, R., Zeisel, A., Braun, E., Hochgerner, H., Petukhov, V., Lidschreiber, K., Kastriti, M. E., Lönnerberg, P., Furlan, A., Fan, J., Borm, L. E., Liu, Z., Van Bruggen, D., Guo, J., He, X., Barker, R., Sundström, E., Castelo-Branco, G., . . . Kharchenko, P. V. (2018). RNA velocity of single cells. Nature, 560(7719), 494–498. https://doi.org/10.1038/s41586-018-0414-6